Why AI Projects Stall Between Prototype and Production

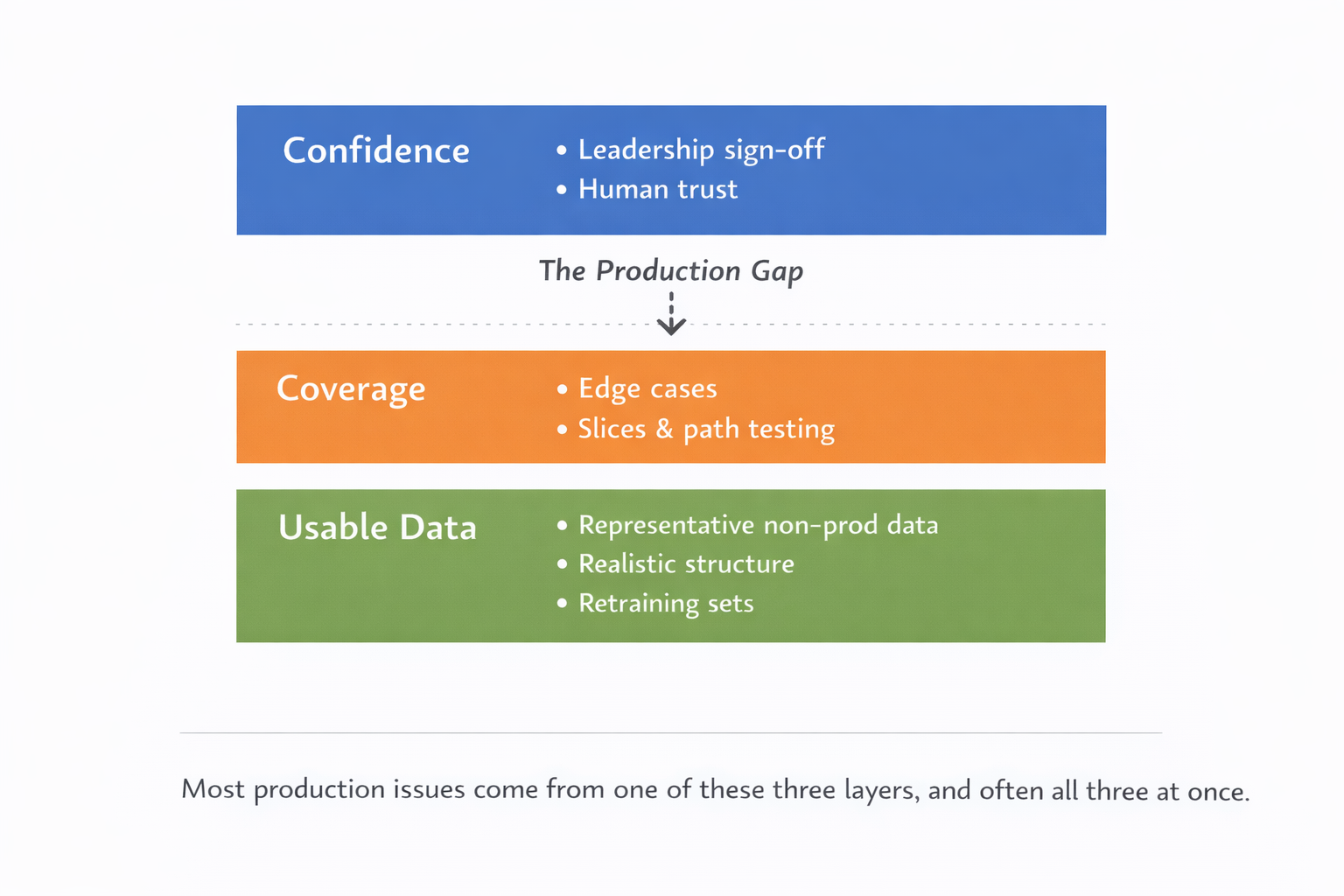

The demo worked. Now leadership wants confidence, coverage, and usable data before it ships to production.

Puneet Anand

Tue Apr 07

Getting a prototype working is not the hard part anymore.

A team can wire up a workflow, call a model, get a decent answer on a sample input, and show something promising in a demo. That part is real progress. But it is also where a lot of AI projects start to fool people. The gap between “it works” and “we can put this in production” is still large, and most organizations are stuck somewhere inside it. McKinsey’s 2025 global survey says nearly nine in ten respondents report regular AI use in at least one business function, but only about one-third say their companies have begun to scale AI programs. McKinsey also says the move from pilots to scaled impact is still a work in progress at most organizations. (McKinsey & Company)

That matches what we keep hearing in customer conversations. Teams are not usually blocked because the model is bad. They are blocked because they cannot prove enough confidence, coverage, and data realism to move from a prototype to something leadership will actually trust in production. In one recent conversation, an engineer said the goal was to get enough confidence to tell leadership, “this solution is working,” and only then push it to production. In the same discussion, that engineer said the real wish was to create the specific scenarios needed to test every path through the workflow.

What changes after the demo

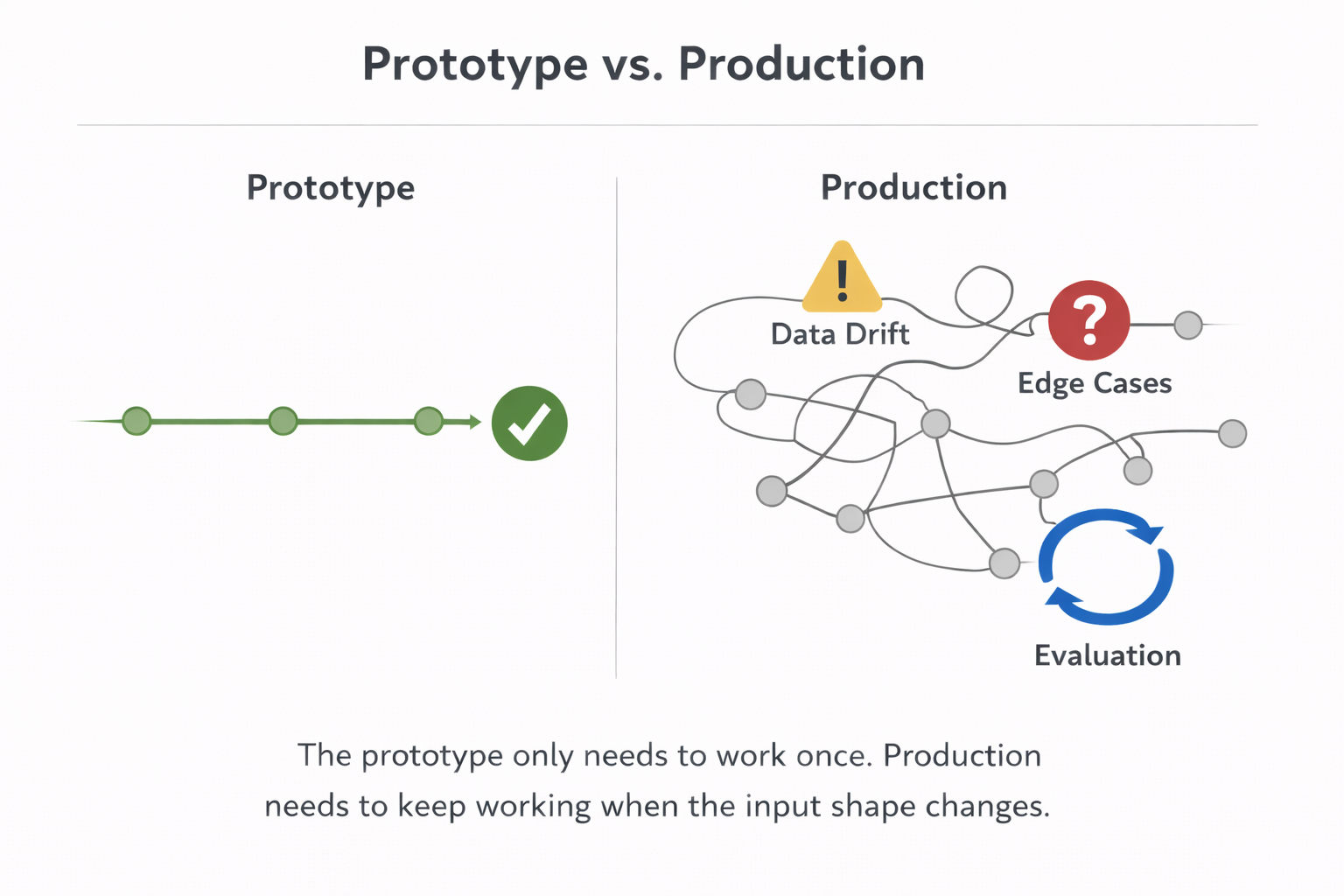

Early on, a prototype only has to show that the idea is possible.

Production has a different job. It has to keep working when the data is messy, when inputs branch in ways nobody wrote down, when edge cases show up, and when the team has to explain why they trust the output enough to attach it to a real business process.

One team we spoke with had already built the workflow logic and was moving toward automation, but described the real problem as “endless possibilities.” Their point was simple. The manual process had accumulated lots of exceptions over time, and once you try to automate it, you need test sets that cover those branches instead of just a clean happy path. Their words were that they needed to “cover everything” and test “every path” that could be traversed.
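To make "every path" concrete, here is a minimal sketch, assuming the workflow can be written down as a small acyclic graph. The node names, the `WORKFLOW` dictionary, and both helper functions are hypothetical and only illustrate how a team might list the branches its test set has not touched yet.

```python
from typing import Dict, List, Set, Tuple

# Hypothetical workflow: each node maps to the nodes it can hand off to.
WORKFLOW: Dict[str, List[str]] = {
    "intake": ["auto_approve", "manual_review"],
    "auto_approve": ["done"],
    "manual_review": ["escalate", "done"],
    "escalate": ["done"],
    "done": [],
}

Path = Tuple[str, ...]


def enumerate_paths(graph: Dict[str, List[str]], start: str, end: str) -> List[Path]:
    """Return every simple path from start to end, sorted for stable output."""
    paths: List[Path] = []
    stack: List[Tuple[str, Path]] = [(start, (start,))]
    while stack:
        node, path = stack.pop()
        if node == end:
            paths.append(path)
            continue
        for nxt in graph[node]:
            if nxt not in path:  # skip nodes already visited, so cycles cannot loop forever
                stack.append((nxt, path + (nxt,)))
    return sorted(paths)


def uncovered_paths(
    graph: Dict[str, List[str]], start: str, end: str, tested: Set[Path]
) -> List[Path]:
    """Paths through the workflow that no test scenario exercises yet."""
    return [p for p in enumerate_paths(graph, start, end) if p not in tested]


# Only the happy path has a test scenario so far.
tested_so_far = {("intake", "auto_approve", "done")}
missing = uncovered_paths(WORKFLOW, "intake", "done", tested_so_far)
```

In this toy graph, `missing` holds the two manual-review branches. Real workflows have far more paths than this, which is exactly why teams end up needing generated scenarios rather than hand-written ones.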

Another team described a similar problem, but from the evaluation side. The issue was not getting the evaluator to work. The issue was trusting it beyond the prototype. They kept coming back to the same point: until the test and evaluation data covered a broader range of realistic variation, the early results were not enough to create real confidence.

Those are two different projects. The underlying problem is the same.

The first blocker is confidence

This is usually the real gate.

Engineers know a prototype is not production-ready just because it ran correctly ten times in a row. Managers know it too, even if they do not always say it that way. What they want is confidence that the thing will keep behaving when it hits the real shape of the work.

That is why one of the strongest patterns in our calls has nothing to do with model architecture. It is about what someone can defend in front of their own leadership. In one conversation, the engineer said the outcome they wanted was confidence for the team, confidence that the graph or workflow really worked, and confidence to move to the next version.

Public research lines up with that. McKinsey says most organizations are still not embedding AI deeply enough into workflows and processes to realize material enterprise-level benefits, and that redesigning workflows is one of the strongest factors associated with meaningful impact. It also says defined processes for deciding when model outputs need human validation are among the practices that distinguish high performers. (McKinsey & Company)

That matters because confidence is not a feeling. It comes from evidence. And in AI projects, that evidence usually lives in the data, the test coverage, and the evaluation process. In practice that means having a ground truth dataset the team actually agrees on, not just a few examples that happened to pass the demo.

The second blocker is coverage

A lot of teams think they have a model problem when they really have a coverage problem.

The prototype looked good because it only saw a narrow slice of reality. The data used to test it was too clean, too small in the wrong way, or too close to the seed examples that created the prototype in the first place.

The evaluator team mentioned above gave a very clear version of this. Their point was not that they needed millions of rows. Their point was that the quality of the system was “dictated by the diversity of the data set more than anything else.” They said that even 200 examples were enough if they were “good and diverse.”

Google’s own MLOps guidance says almost the same thing in more formal language. It recommends that training, validation, and test splits be representative of the input data, that model quality be tested on important slices rather than only on a global metric, and that data validation checks for schema mismatches, missing values, domain changes, and distribution shifts before retraining or deployment. (Google Cloud Documentation)
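As a rough illustration of those validation checks, here is a minimal sketch in plain Python. The schema, the field names, and the three-standard-deviation threshold are assumptions made for the example, and the mean-shift test is a deliberately crude stand-in for a proper distribution comparison.

```python
from statistics import mean, pstdev
from typing import Any, Dict, List

# Hypothetical schema for one record in a batch.
EXPECTED_SCHEMA = {"amount": float, "region": str}


def validate_batch(
    rows: List[Dict[str, Any]],
    reference_amounts: List[float],
    max_shift: float = 3.0,
) -> List[str]:
    """Return a list of human-readable problems found in the batch."""
    problems: List[str] = []

    # Schema and missing-value checks, row by row.
    for i, row in enumerate(rows):
        for field, expected_type in EXPECTED_SCHEMA.items():
            if field not in row or row[field] is None:
                problems.append(f"row {i}: missing {field}")
            elif not isinstance(row[field], expected_type):
                problems.append(f"row {i}: {field} is not {expected_type.__name__}")

    # Crude shift check: how far has the batch mean moved, measured in
    # standard deviations of the reference data?
    amounts = [r["amount"] for r in rows if isinstance(r.get("amount"), float)]
    if amounts and len(reference_amounts) > 1:
        ref_mean = mean(reference_amounts)
        ref_std = pstdev(reference_amounts)
        if ref_std > 0 and abs(mean(amounts) - ref_mean) / ref_std > max_shift:
            problems.append("amount: batch mean shifted beyond threshold")

    return problems


reference = [10.0, 12.0, 11.0, 9.0]
suspect_batch = [
    {"amount": 100.0, "region": "EU"},
    {"amount": None, "region": "US"},
    {"amount": 90.0, "region": 5},
]
issues = validate_batch(suspect_batch, reference)
```

Here `issues` flags one missing value, one type mismatch, and one shifted distribution. The point is not the specific checks but that they run before anyone retrains or deploys.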

This is where a lot of AI projects slow down. The team knows the prototype is fragile, but the missing work is not another prompt tweak. It is building the data that can expose where the system breaks. That is the part of LLM testing most teams skip because it is slower and less satisfying than tuning the model.

The third blocker is usable data

Even when teams know what they need to test, they often cannot get the right data in time.

That came up repeatedly in customer conversations. In one enterprise discussion, the data problem was described as a “big fight,” specifically because the real data lived in production and the team still had to figure out how to create usable non-prod data from it. Data anonymization techniques are usually the first thing teams try. The problem is that anonymization often strips the context that made the data meaningful. Nested relationships might break, domain-specific values get replaced with noise, and the result is technically de-identified but possibly useless for testing. In another conversation, the issue was not lack of data in the abstract. It was that getting data through the internal team that handled it could take about a month or more, which was already being talked about as an upcoming bottleneck elsewhere in the organization.
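One common way to keep relationships intact while masking values is deterministic pseudonymization: the same input always maps to the same token, so a reference in a nested record still points at its parent. The sketch below is an illustration under assumptions, not a description of any team's pipeline. The field names and the key are hypothetical, and a keyed hash like this is pseudonymization rather than full anonymization, so it does not settle the legal question on its own.

```python
import hashlib
import hmac
from typing import Any

# Hypothetical key and field list; a real key belongs in a secrets manager.
SECRET_KEY = b"replace-with-a-real-secret"
SENSITIVE_FIELDS = {"customer_id", "email"}


def pseudonymize(value: str) -> str:
    """Map a value to a stable token: same input, same output."""
    digest = hmac.new(SECRET_KEY, value.encode("utf-8"), hashlib.sha256).hexdigest()
    return "tok_" + digest[:12]


def scrub(record: Any) -> Any:
    """Walk nested dicts and lists, replacing only the sensitive fields."""
    if isinstance(record, dict):
        return {
            key: pseudonymize(str(val))
            if key in SENSITIVE_FIELDS and val is not None
            else scrub(val)
            for key, val in record.items()
        }
    if isinstance(record, list):
        return [scrub(item) for item in record]
    return record


order = {
    "customer_id": "C-1",
    "total": 10,
    "items": [{"sku": "A", "customer_id": "C-1"}],
}
scrubbed = scrub(order)
```

After scrubbing, the parent record and the nested item still share one customer token, while `total` and `sku` are untouched. What this does not solve is the harder problem described above: domain-specific values that lose their meaning once replaced.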

There is another version of the same problem that shows up in more technical systems. One team told us their data simply could not be randomized. The data was deeply nested, context-sensitive, and versioned. Generic generators were useless because arbitrary values broke the meaning of the data. Their goal was not fake-looking data. It was statistically similar data learned from existing examples so they could train and test without being locked to one customer’s exact dataset.

This is why “just use synthetic data” often sounds shallow to practitioners. The hard part is not generating more rows. The hard part is generating data that still behaves like the real thing.

The fourth blocker is time

Once a project reaches the point where it is failing on edge cases or drift, the cycle can get slow fast.

One data leader we spoke with described a retraining cycle that ran from weeks to months between detecting drift and redeploying. They said the bottleneck was the training phase itself, plus the work needed to analyze what changed, rebuild the training set, rerun the model, test again, and get through approvals. They also said that during this period the model might have to be stopped, which creates a coverage gap.

That is a good example of why AI projects stall after the prototype. The work is no longer model tinkering. It is data analysis, data rebuilding, validation, comparison to the current system, and deciding whether the next version is actually safer to promote. Generating synthetic data for LLM training or retraining sounds like a shortcut, but the hard part is that it has to reflect the drift that actually happened, not just add more of what the model already saw.

Google’s MLOps guidance treats this as a pipeline problem, not a heroics problem. It recommends automated data and model validation in continuous training, plus evaluation against a separate test split and comparison between the candidate model and the current production model before promotion. (Google Cloud Documentation)
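The comparison step can be sketched as a small promotion gate. This is an assumption-heavy illustration: the metric dictionaries, the slice names, and the thresholds are all hypothetical, and both models are assumed to have been scored on the same held-out test split.

```python
from typing import Dict


def should_promote(
    candidate: Dict[str, float],
    production: Dict[str, float],
    min_overall_gain: float = 0.0,
    max_slice_regression: float = 0.02,
) -> bool:
    """Decide whether a candidate model may replace the production model.

    Both dicts map a slice name to a quality metric where higher is better,
    and both must include an "overall" entry.
    """
    # The candidate must at least match production overall.
    if candidate["overall"] < production["overall"] + min_overall_gain:
        return False

    # No individual slice may get noticeably worse, even if the average improves.
    for slice_name, prod_score in production.items():
        cand_score = candidate.get(slice_name)
        if cand_score is None:
            return False  # a slice that was never evaluated is treated as a failure
        if prod_score - cand_score > max_slice_regression:
            return False

    return True


production_scores = {"overall": 0.90, "standard": 0.95, "exceptions": 0.70}
better_on_average = {"overall": 0.92, "standard": 0.98, "exceptions": 0.60}
better_everywhere = {"overall": 0.92, "standard": 0.96, "exceptions": 0.75}
```

With these numbers, `better_on_average` is rejected because the exceptions slice regressed, while `better_everywhere` passes. Putting a rule like this in the pipeline is what turns a promotion decision from a debate into a check.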

What teams that get through this do differently

The pattern across customer calls and public guidance is pretty consistent.

First, they stop treating data as a side input. They treat it as part of the product. That means the team knows what realistic scenarios matter, what slices matter, and what level of coverage is required before anyone asks for production approval.

Second, they stop chasing volume for its own sake. The goal is not a huge dataset. The goal is a representative dataset with enough diversity to surface real failure modes. The evaluator team made that point directly. A few hundred examples can be enough if they are the right ones.

Third, they separate “working from real data” from “copying production data wholesale.” In practice, teams want to preserve structure, constraints, and distributions while making the data usable for testing, evaluation, and retraining. That is why the most credible product promise we have seen in our own work is simple: do not replace the customer’s data; work from it. The internal positioning work that came out of these conversations landed on the same thing. Seed-based, reality-grounded generation matters because technical buyers do not want random output. They want something that keeps the shape of their data intact.

Fourth, they build evaluation around slices and scenarios, not just averages. Google explicitly recommends testing important data slices because global summary metrics can hide fine-grained performance issues. (Google Cloud Documentation) That matches what practitioners told us. The real pain is often in the branch, the exception, or the edge case that was absent from the prototype dataset. But slicing only works when the ground truth data is reliable enough to compare against. A slice-based evaluation built on shaky labels is not more rigorous than a global average. It is just wrong.
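A small worked example shows how an average can hide a failing slice. The records, field names, and the `accuracy_by_slice` helper below are made up for illustration, and the sketch assumes each example already carries a trusted expected label.

```python
from collections import defaultdict
from typing import Any, Dict, List


def accuracy_by_slice(examples: List[Dict[str, Any]], slice_key: str) -> Dict[str, float]:
    """Compute accuracy separately for each value of slice_key."""
    totals: Dict[str, int] = defaultdict(int)
    correct: Dict[str, int] = defaultdict(int)
    for ex in examples:
        name = ex[slice_key]
        totals[name] += 1
        correct[name] += int(ex["predicted"] == ex["expected"])
    return {name: correct[name] / totals[name] for name in totals}


# Hypothetical eval set: eight routine cases the system gets right,
# two exception cases it gets wrong.
eval_set = (
    [{"case_type": "standard", "expected": "approve", "predicted": "approve"}] * 8
    + [{"case_type": "exception", "expected": "escalate", "predicted": "approve"}] * 2
)

overall = sum(ex["predicted"] == ex["expected"] for ex in eval_set) / len(eval_set)
per_slice = accuracy_by_slice(eval_set, "case_type")
```

Here `overall` is 0.8, which looks respectable in a demo, while `per_slice` shows 1.0 on standard cases and 0.0 on exceptions. The second number is the one leadership actually needs to see.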

Where a tool like DataFramer fits

If the thing blocking production is confidence, then the practical job is not “generate more data” in the abstract.

The job is to help a team start from a few trusted examples and turn them into a broader, more realistic set of test, eval, or training data without breaking the structure that makes the original examples useful.

Most teams shopping for an eval or test data generator run into the same wall: the tools either produce random rows or documents that break the structure of their data, or they require so much configuration that they are slower than writing examples by hand.

What most teams actually need from a synthetic data platform is not volume. It is structural fidelity: output that behaves like the real data without being the real data.

That is the common thread behind these customer conversations. One team wanted more diverse inputs so dataset annotation could be done without going back to the legal or data team every time. Another wanted path coverage and pre-labeled realistic scenarios before leadership would trust the workflow. Another wanted data that kept nested structure intact because random values were meaningless. Another wanted a faster way to build the next training set when drift showed up.

That is also why the cleanest product framing is not “synthetic data generation.” It is closer to this: take your own data further. Preserve the shape. Add the missing diversity, coverage, and edge cases. Use it to test, evaluate, and retrain before production punishes you for the gaps.

The short version

AI projects rarely slow down because the first prototype failed.

They stall because the team cannot answer three production questions clearly enough:

- Do we trust this enough to roll it out?

- Have we tested enough of what the real world will throw at it?

- Do we have usable data to keep improving it when the first version falls short?

If those answers are weak, the project slows down. Not because people lost interest, but because they are doing the responsible thing.

That is why the gap between prototype and production is still where so much AI work gets stuck. McKinsey says adoption is broad but scaled impact is still limited. Deloitte says leaders are moving past hype and focusing on use cases with measurable return, plus patience and iteration. Google’s MLOps guidance says the path to production runs through representative data, explicit evaluation, sliced testing, and automated validation. Our own customer conversations say the same thing in plainer language: confidence, coverage, and usable data are what decide whether the project actually ships. (McKinsey & Company)
